
Building MergeMeter in Public: Pre-MVP

I am a fan and supporter of the #buildInPublic trend. Rome wasn’t built in a day, and neither was any meaningful software product. If anything, building in public matters more now than it used to, because it signals that what you are shipping is not haphazardly built AI slop thrown together in a weekend. Real products take time, thought, and iteration. So I wanted to tell you about what I am building and where I currently am in the process.

The project that I am spending most of my development time on lately is MergeMeter. It is a software engineering metrics platform designed to help leaders use data to better understand the flow of work through their teams and have conversations about reducing bottlenecks.

This project is also scratching an itch for me personally. In past roles, I spent countless hours obsessing over the data I could glean from various systems about my teams. It helped me put data behind my gut feelings about where bottlenecks were and led to some great conversations with team members and stakeholders about the state of the department.

I think metrics can be very powerful, but it is important to keep in mind that they are best when used to seed conversations and not treated as the whole story. Metrics should help leaders understand systems, not judge individuals.

Over the years, I often ended up building my own small tools or scripts to gather some of this information because the commercial tools on the market were either extremely expensive or focused on measuring individual productivity instead of understanding system flow. MergeMeter is an attempt to make those types of insights more accessible.

Technology Stack

The major pieces of the stack are:

- Next.js

- React

- MUI components

- Postgres

- GitHub Actions and OAuth

- Stripe

- Hosting on Vercel

How MergeMeter Works

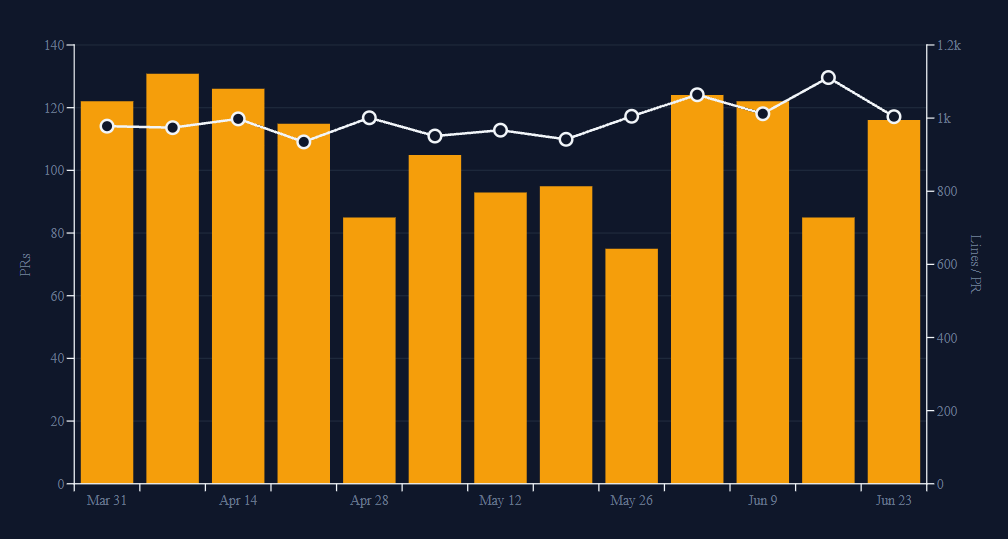

As of now, the product focuses on the data that can be gleaned from pull request metadata, along with optional surveys related to the pull request.

The metrics are gathered via a GitHub Action added to a code repository. The action is triggered when a pull request is merged, sending meaningful metadata, never source code, to MergeMeter.
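To make "metadata, never source code" concrete, here is a minimal sketch of how a merged pull request event could be reduced to a small payload. The input fields follow GitHub's `pull_request` webhook event; the output shape and the function name `toMergedPrMetadata` are assumptions for illustration, not MergeMeter's actual payload.

```typescript
// Minimal local types for the fields used here; the real GitHub event has many more.
interface PullRequestEvent {
  action: string;
  repository: { full_name: string };
  pull_request: {
    number: number;
    merged: boolean;
    created_at: string;
    merged_at: string | null;
    additions: number;
    deletions: number;
    changed_files: number;
    user: { login: string };
  };
}

// Hypothetical payload shape: counts and timestamps only, no diff or file contents.
interface MergedPrMetadata {
  repo: string;
  number: number;
  author: string;
  openedAt: string;
  mergedAt: string;
  additions: number;
  deletions: number;
  changedFiles: number;
}

function toMergedPrMetadata(event: PullRequestEvent): MergedPrMetadata | null {
  const pr = event.pull_request;
  // GitHub reports a merge as a "closed" action with merged === true.
  if (event.action !== "closed" || !pr.merged || pr.merged_at === null) {
    return null;
  }
  return {
    repo: event.repository.full_name,
    number: pr.number,
    author: pr.user.login,
    openedAt: pr.created_at,
    mergedAt: pr.merged_at,
    additions: pr.additions,
    deletions: pr.deletions,
    changedFiles: pr.changed_files,
  };
}
```

A pull request that is closed without merging returns `null` here, so nothing would be sent for it.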

If configured, surveys regarding things such as confidence level, risk potential, and AI usage are sent to the pull request author and pull request approvers.

Users can then log into MergeMeter using their GitHub login to view metrics about the pull requests in their organization.

I cannot overstate how valuable pull request data is on its own. It can seed great conversations by providing context about many aspects of the software development process, including delivery patterns, review behavior, and change size.

Metrics Available Today

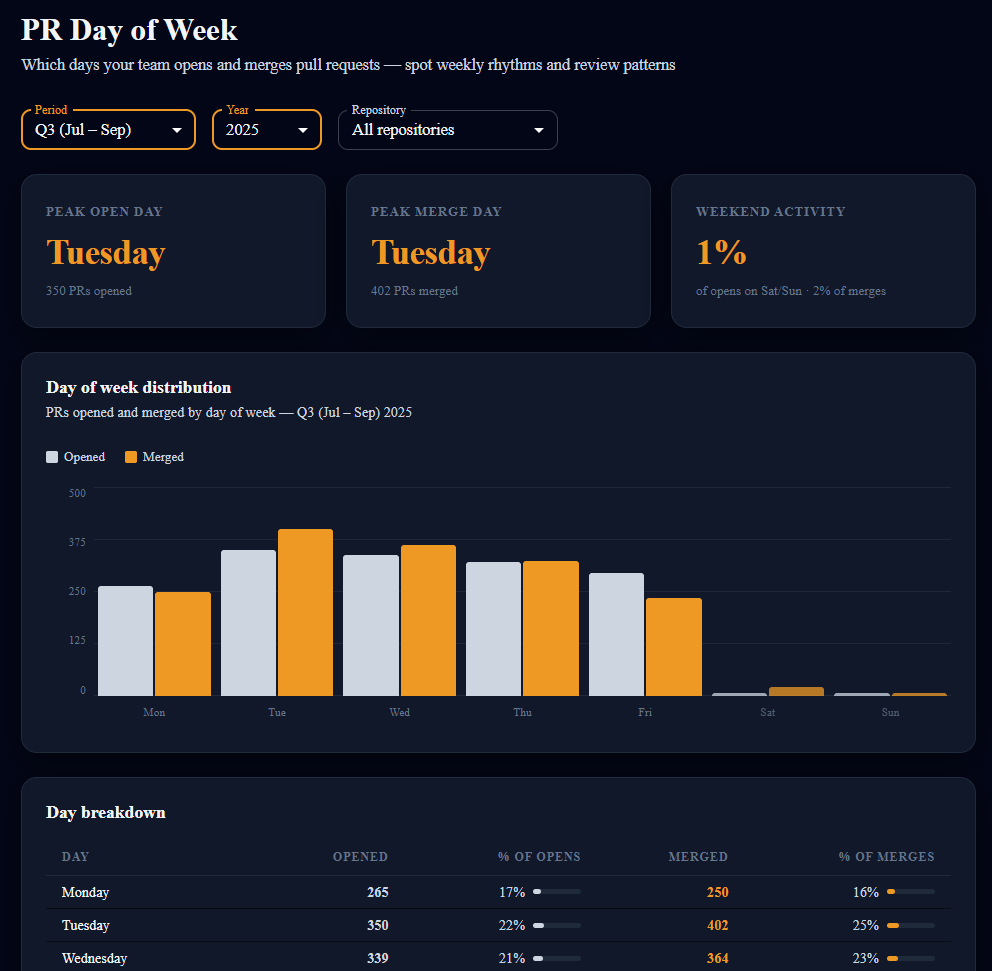

The current metrics available include:

- Pull request throughput (sliceable by week, month, quarter, and committer)

- Pull request size

- Pull request merge confidence

- Days of the week when most pull requests are opened and closed

- Pull request cycle time

- Pull request approver and reviewer counts

- Files changed per pull request

- Time until first pull request feedback

- and more.
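Two of the metrics above, cycle time and time until first feedback, come down to simple timestamp arithmetic. The sketch below assumes cycle time means "opened to merged" and that each pull request carries three ISO 8601 timestamps; the field names and the choice of a median are illustrative assumptions, not a description of how MergeMeter computes them.

```typescript
// Hypothetical record: the three timestamps needed for these two metrics.
interface PrTimestamps {
  openedAt: string;
  firstReviewAt: string | null; // null when nobody reviewed before merge
  mergedAt: string;
}

const MS_PER_HOUR = 60 * 60 * 1000;

function hoursBetween(start: string, end: string): number {
  return (Date.parse(end) - Date.parse(start)) / MS_PER_HOUR;
}

// Cycle time, taken here as opened-to-merged.
function cycleTimeHours(pr: PrTimestamps): number {
  return hoursBetween(pr.openedAt, pr.mergedAt);
}

// Time until the first review activity, or null if there was none.
function timeToFirstFeedbackHours(pr: PrTimestamps): number | null {
  return pr.firstReviewAt === null
    ? null
    : hoursBetween(pr.openedAt, pr.firstReviewAt);
}

// Median is less sensitive than the mean to one pull request that sat for weeks.
function median(values: number[]): number | null {
  if (values.length === 0) return null;
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 0
    ? (sorted[mid - 1] + sorted[mid]) / 2
    : sorted[mid];
}
```

Reporting a median per week or month keeps the number useful for conversations about the system rather than about any single pull request.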

Historical Data Import

To help provide context on day one, there is a feature that allows users to pull historical pull request data from GitHub. This allows teams to establish context for the data they are seeing and begin observing trends immediately instead of waiting months for new data to accumulate.
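As a rough illustration of what a historical import involves, the sketch below pages through GitHub's "list pull requests" REST endpoint for closed pull requests and keeps only the merged ones. The endpoint and its `state`, `per_page`, and `page` parameters are GitHub's; the function names, the injected `fetchPage` callback, and the `maxPages` limit are assumptions made for the example, and authentication and rate limiting are left out.

```typescript
// Only the fields this sketch needs from each item in the GitHub response.
interface PullSummary {
  number: number;
  created_at: string;
  merged_at: string | null; // null when the PR was closed without merging
}

// Builds the URL for one page of closed pull requests.
function buildListUrl(owner: string, repo: string, page: number): string {
  const base = `https://api.github.com/repos/${encodeURIComponent(owner)}/${encodeURIComponent(repo)}/pulls`;
  return `${base}?state=closed&per_page=100&page=${page}`;
}

// "closed" includes abandoned PRs, so filter down to the merged ones.
function keepMerged(prs: PullSummary[]): PullSummary[] {
  return prs.filter((pr) => pr.merged_at !== null);
}

// The caller supplies the HTTP call (hypothetical), which keeps this testable.
type PageFetcher = (url: string) => Promise<PullSummary[]>;

async function importHistory(
  owner: string,
  repo: string,
  fetchPage: PageFetcher,
  maxPages = 10, // arbitrary safety limit for the sketch
): Promise<PullSummary[]> {
  const merged: PullSummary[] = [];
  for (let page = 1; page <= maxPages; page++) {
    const batch = await fetchPage(buildListUrl(owner, repo, page));
    if (batch.length === 0) break; // an empty page means there is no more history
    merged.push(...keepMerged(batch));
  }
  return merged;
}
```

In practice `fetchPage` would wrap an authenticated `fetch` call and parse the JSON response; passing it in as a parameter keeps the paging logic separate from the network code.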

The Metrics That Still Matter at the Dawn of the AI Era

There has been a lot of discussion lately about measuring AI usage and AI return on investment inside engineering organizations. I hear it in conversations with peers, at conferences, and across many of the articles and podcasts circulating in the industry. It makes sense that leadership teams and boards are interested in it. AI is clearly changing how software is written.

That said, the industry is still figuring out what meaningful measurement actually looks like. Counting prompts, tracking tool usage, measuring the acceptance rate of AI suggestions, or estimating the percentage of AI-generated code does not necessarily tell you whether your engineering system is actually improving. Usage without context does not say very much.

If a team is generating large amounts of AI-assisted code but that code shows up as massive pull requests that take days to review, then the net impact on the system may not actually be positive. In some cases the bottleneck simply moves somewhere else in the development process.

You still need to track the flow of work through the system to provide context around its impact. Is the team delivering more work? Are reviews happening faster? Are bottlenecks improving or shifting to new places?

This is where pull request flow metrics become incredibly valuable. Pull requests sit at the end of the software development assembly line. They track when work starts, when collaboration occurs, and when code is committed to the repository.

Even if AI agents eventually write a majority of the code, teams will still rely on pull requests and reviews to move work from idea to merged code.

Pricing Philosophy

Unlike many existing tools in this space, MergeMeter will have billing tiers based on the number of pull requests processed per month rather than charging per developer.

Most engineering metrics tools are priced based on the number of users or committers in the system. I have always found that model somewhat frustrating since it penalizes teams simply for having more engineers. Pricing based on pull request volume feels more aligned with the actual usage of the system.
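The difference between the two models is easy to see in a small sketch. The tier names and limits below are placeholders invented for the example, not MergeMeter's actual plans; the point is only that the tier depends on pull request volume and head count never enters the calculation.

```typescript
interface Tier {
  name: string;
  maxPrsPerMonth: number;
}

// Placeholder tiers for illustration only; not real MergeMeter plans.
const exampleTiers: Tier[] = [
  { name: "small", maxPrsPerMonth: 100 },
  { name: "medium", maxPrsPerMonth: 500 },
  { name: "large", maxPrsPerMonth: Infinity },
];

// Picks the smallest tier whose monthly limit covers the processed volume.
function pickTier(prsThisMonth: number, tiers: Tier[]): Tier {
  const sorted = [...tiers].sort(
    (a, b) => a.maxPrsPerMonth - b.maxPrsPerMonth,
  );
  const match = sorted.find((t) => prsThisMonth <= t.maxPrsPerMonth);
  if (!match) {
    throw new Error(`no tier covers ${prsThisMonth} pull requests`);
  }
  return match;
}
```

Under this kind of scheme, a team of 5 and a team of 50 that each merge 80 pull requests in a month land on the same tier.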

What Is Still Being Built

Some of the additional features on the horizon, to be developed either before or after launch, depending on feedback from users, include:

- GitHub Action and OAuth approval in the GitHub Marketplace

- Generating monthly reports sent to teams and admins via email

- Additional metrics

- AI usage data for some of the available AI code generation tools

- Surveys delivered via email

- Gathering data when pull requests are opened to track additional lifecycle events

- An associated Slack app and/or browser extension

- KPI tracking

- Comparisons between your team data and industry averages

- and more.

Where Things Stand

MergeMeter is getting close to a state where it could go live, but I want to have more discussions with potential users first to make sure it is meaningful and valuable to my fellow engineering leaders.

That is part of the reason I am sharing this in public. If you are an engineering leader who thinks about these types of metrics, I would love to hear what kinds of signals or insights you wish you had better visibility into.